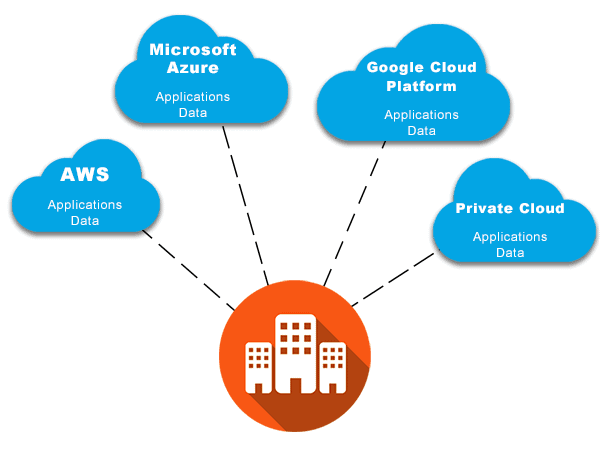

The Public Cloud consists of hosting data in large-scale data centers that are easily accessible and operated by private companies such as Microsoft, Google, Amazon Web Services, IBM, OVH or Salesforce.

These servers are publicly accessible, on a self-service basis, from any device connected to the Internet. As a result, all resources (servers, power, active network elements…) are shared among all users.

The main advantage of the Public Cloud lies in the simplicity and speed of its implementation. A credit card and a few clicks are enough to store your data on a public cloud service. For a company, it is nevertheless necessary to supervise and professionalize this approach, in particular to avoid Shadow IT (tools adopted by employees without the IT department's approval). This is why more and more IT departments are using the services of IT outsourcing companies.

The responsiveness and adaptability of the Public Cloud make it a very popular solution.

Implementing a Public Cloud solution is the fastest way to get an efficient information system up and running, especially for “turnkey” services such as office suites, CRM or HR suites.

1 Private Cloud

The Private Cloud is a solution consisting of hosting data on servers dedicated to the needs of a single company. These servers can be physically installed in the company’s premises or in a specialized data center.

By definition, the Private Cloud is a tailor-made solution designed for flexibility and scalability. The CIO and operational teams can build their architecture with far fewer constraints than with a public cloud provider.

The other strong point of the Private Cloud is its high level of security. Since the data is physically isolated, it is less exposed to outside parties. This solution is particularly suitable for companies with high confidentiality or data protection requirements that want to control the security chain themselves.

Large enterprises often build their private clouds in their own data centers, to offer their employees and partners the same flexibility, responsiveness and scalability as public clouds, but in a fully controlled environment. Generally speaking, the simplest solution is still to opt for a private cloud hosted by an external provider, which frees the company from hardware investment issues.

2 On Premise

The On Premise solution (“On prem” in the language of IT departments) consists of physically hosting the servers and therefore all of the company’s data on its own premises. It involves the company investing in its own technical equipment.

On Premise can be an alternative to the Cloud for infrastructure (IaaS: Infrastructure as a Service) but also for software and licenses (SaaS: Software as a Service). The company acquires its equipment for an unlimited period.

The main advantage of On Premise is that the company has total control over its data. The CIO relies only on in-house teams and is not dependent on an external provider. Since the data is hosted within the company, it remains accessible from the internal network regardless of the quality of the Internet connection. Finally, the level of security and confidentiality is, in principle, at its highest.

3 Outsourced (virtualized) hosting

Outsourced hosting consists of having your servers hosted in a professional data center, managed and operated by a digital service provider.

Although some providers also offer to host physical servers, in this white paper we will only deal with virtualized hosting, which offers more flexibility and scalability than hosting physical machines.

Technically, virtualization consists of splitting a single physical server into several completely independent virtual servers. To do this, a virtualization software layer is used, generally called a hypervisor.
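To make the idea of splitting one physical server more concrete, here is a minimal sketch in Python. It is only an illustration, not how any real hypervisor is implemented: the `Host` class, its `create_vm` method and the capacity figures are all hypothetical, and the model assumes resources are strictly reserved (real hypervisors often allow overcommitting CPU and memory).

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """Hypothetical physical server with a fixed pool of CPU cores and RAM."""
    cores: int
    ram_gb: int
    # VM name -> (cores, ram_gb) reserved for that VM
    vms: dict = field(default_factory=dict)

    def free(self):
        """Return (free cores, free RAM in GB) not yet reserved by any VM."""
        used_cores = sum(c for c, _ in self.vms.values())
        used_ram = sum(r for _, r in self.vms.values())
        return self.cores - used_cores, self.ram_gb - used_ram

    def create_vm(self, name, cores, ram_gb):
        """Reserve resources for a new VM; return False if they do not fit."""
        if name in self.vms:
            raise ValueError(f"VM {name!r} already exists")
        free_cores, free_ram = self.free()
        if cores > free_cores or ram_gb > free_ram:
            return False
        self.vms[name] = (cores, ram_gb)
        return True


if __name__ == "__main__":
    # Assumed example figures: one 16-core, 64 GB server split into two VMs.
    host = Host(cores=16, ram_gb=64)
    host.create_vm("web", cores=4, ram_gb=16)
    host.create_vm("db", cores=8, ram_gb=32)
    print(host.free())  # (4, 16)
    print(host.create_vm("batch", cores=8, ram_gb=8))  # False: only 4 cores left
```

Each virtual server only sees the share it was given, which is why several independent workloads can run on the same machine until its physical capacity is used up.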

Outsourced Hosting allows the company to benefit from the advantages of a dedicated environment, without having to manage all the tasks of maintaining this infrastructure in operational conditions.

All maintenance of the cooling system, active network elements and security is handled by the provider. In most cases, the provider will also offer to take on a number of maintenance tasks on the server itself, leaving the CIO free to focus on higher-value projects.

Cloud infrastructures are also used in the crypto industry to run blockchains, as explained here: https://www.cloudactu.fr/partenariat-cloud-blockchain-vechain-et-amazon-aws/
